Core Event

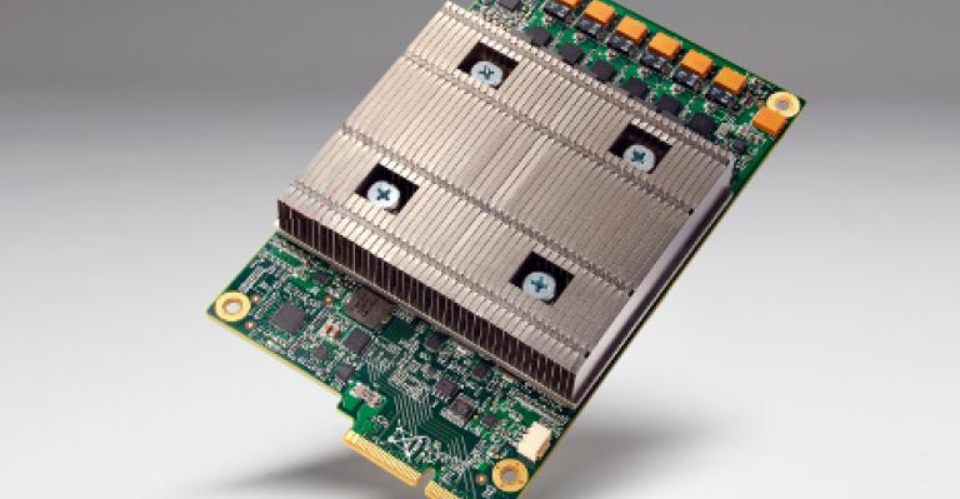

On April 22 local time, Google officially released two artificial intelligence chips—the TPU 8t and TPU 8i—at the Cloud Next 2026 conference in Las Vegas.

This is the first time Google has split AI training and inference across distinct processors, a significant strategic shift in the company’s AI chip business.

Technical Breakthrough: First Separation of Training and Inference

For years, each generation of Google’s AI chips handled both model training and inference. However, with the rapid development of AI agents, market demands for computing power have become increasingly diverse and specialized.

Amin Vahdat, Google’s Senior Vice President and Chief Technologist for AI and Infrastructure, stated: “With the rise of AI agents, we believe the community will benefit from chips optimized separately for training and serving needs.”

Based on this insight, Google implemented hardware separation between training and inference for the first time in its 8th generation TPU.

TPU 8t: Training Focus

The TPU 8t is optimized specifically for AI model training and, according to Google, can reduce frontier model development cycles from months to weeks. More significantly, the chip delivers 2.8x the performance per dollar of the 7th generation Ironwood TPU. That gain is attractive for users who need high-performance computing but want to keep operating costs under control.

TPU 8i: Inference Focus

The TPU 8i is better suited for inference tasks—running trained AI models and handling AI agent workloads. This chip incorporates 384MB of SRAM, triple that of Ironwood, significantly reducing inference latency and improving response speed.
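As a rough illustration of what the two headline figures imply, the sketch below works through the arithmetic. The function names and the $1,000,000 baseline budget are hypothetical and chosen only for illustration. The 2.8x and 384MB figures are the ones quoted above, and the Ironwood SRAM value is simply what “triple” implies, not a figure stated in this article.

```python
def cost_for_same_work(baseline_cost: float, perf_per_dollar_gain: float) -> float:
    """Cost of running the same workload after a performance-per-dollar gain."""
    return baseline_cost / perf_per_dollar_gain


def implied_baseline_sram_mb(new_sram_mb: float, multiple: float) -> float:
    """SRAM of the older chip implied by 'new chip has `multiple` times as much'."""
    return new_sram_mb / multiple


# Hypothetical training budget on Ironwood (assumption, not a real price).
baseline_cost = 1_000_000.0

# 2.8x performance per dollar, as quoted for the TPU 8t.
new_cost = cost_for_same_work(baseline_cost, 2.8)
savings_pct = (1 - new_cost / baseline_cost) * 100

# 384MB on the TPU 8i, described as triple Ironwood's SRAM.
ironwood_sram_mb = implied_baseline_sram_mb(384, 3)

print(f"Same workload on TPU 8t: ${new_cost:,.0f} ({savings_pct:.0f}% less)")
print(f"Implied Ironwood SRAM: {ironwood_sram_mb:.0f}MB")
```

Under these assumptions, the same training workload would cost roughly 36% of the baseline, and the implied Ironwood SRAM capacity is 128MB. Real savings would depend on pricing, utilization, and workload fit, none of which the announcement details.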

Competitive Landscape: Tech Giants Converge on Nvidia

Google’s move represents the latest attempt by tech giants to challenge Nvidia’s dominance in the AI chip market.

Google has long invested in AI chips. As early as 2015, Google began using custom processors to run AI models; in 2018, the company started renting these chips to cloud customers. According to DA Davidson analysts, Google’s TPU business combined with its DeepMind team is valued at approximately $900 billion.

Meanwhile, other tech giants are accelerating their own chip efforts:

Amazon released the Inferentia chip for AI request processing in 2018 and launched the Trainium chip for training purposes in 2020.

This week, Amazon announced an expanded partnership with AI company Anthropic, with the latter committing to invest over $100 billion in AWS over the next decade, purchasing Trainium chips and tens of millions of Graviton CPU cores, locking in up to 5 gigawatts of computing power.

Meta is developing its own AI chips and announced last week it is collaborating with Broadcom to develop multiple chip products.

Microsoft released its second-generation custom AI chip in January this year.

Nvidia’s Position Remains Solid

Despite intensifying competition, Nvidia’s market position remains difficult to displace in the short term.

Notably, Google did not make direct performance comparisons between its new chips and Nvidia’s products. Google only stated that TPU 8t delivers 2.8x performance per dollar compared to the 7th generation Ironwood TPU, with the inference chip showing an 80% performance improvement.

In March, Nvidia announced its next-generation chip plans. The chip uses technology acquired from chip startup Groq for $20 billion and can enable models to respond to users faster. Nvidia stated that its upcoming Groq 3 LPU chip will make extensive use of static random-access memory (SRAM), the same technology Google’s TPU 8i relies on with its 384MB of SRAM per chip.

Industry Significance

From a broader perspective, Google’s decision to separate training and inference chips reflects structural changes occurring in the AI industry.

As AI applications move from laboratories to large-scale deployment, the computing demands of different scenarios are diverging. Training tasks require high throughput and large memory bandwidth, while inference tasks prioritize low latency and high energy efficiency. Separating these tasks into dedicated hardware allows resources to be allocated more efficiently.

This trend will also drive the entire AI chip industry toward greater specialization and diversification. For enterprise users, diversified choices help reduce supply chain risks and costs; for the industry, competition will accelerate technological innovation and performance improvements.

Summary

The release of Google’s TPU 8t/8i represents a significant milestone in the development of AI chips. It not only demonstrates Google’s continued investment in AI infrastructure but also reflects the industry’s evolution toward greater refinement and specialization.

Although Nvidia will remain the AI chip market leader in the near term, the sustained efforts of tech giants like Google, Amazon, Meta, and Microsoft are injecting more vitality into this field. The future competition in AI computing power will not merely be a technological race but a comprehensive contest involving ecosystems and user experience.